Scraping Jishou University transcripts with Python

Contents

- Project address

- Environment

- Configuration and usage

- Results

- Full code

Project address:

https://github.com/chen0495/pythonCrawlerForJSU

Environment

- Python 3.5 or later

- requests, BeautifulSoup, numpy, pandas

- Install BeautifulSoup with the command pip install BeautifulSoup4

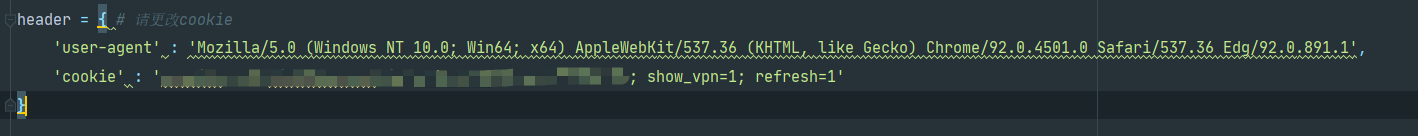
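Before running the crawler, it can help to confirm that the four packages are importable. The following is a minimal sketch, not part of the project; the helper name `missing_packages` is hypothetical. Note that the package installed as BeautifulSoup4 is imported as `bs4`.

```python
import importlib.util

# Import names of the packages listed above. The package installed as
# "BeautifulSoup4" is imported under the name "bs4".
REQUIRED = ["requests", "bs4", "numpy", "pandas"]


def missing_packages(names):
    """Return the import names that cannot be found in this environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_packages(REQUIRED)
    if missing:
        print("Missing packages:", ", ".join(missing))
    else:
        print("All required packages are installed.")
```

Any name reported as missing can then be installed with pip.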

Configuration and usage

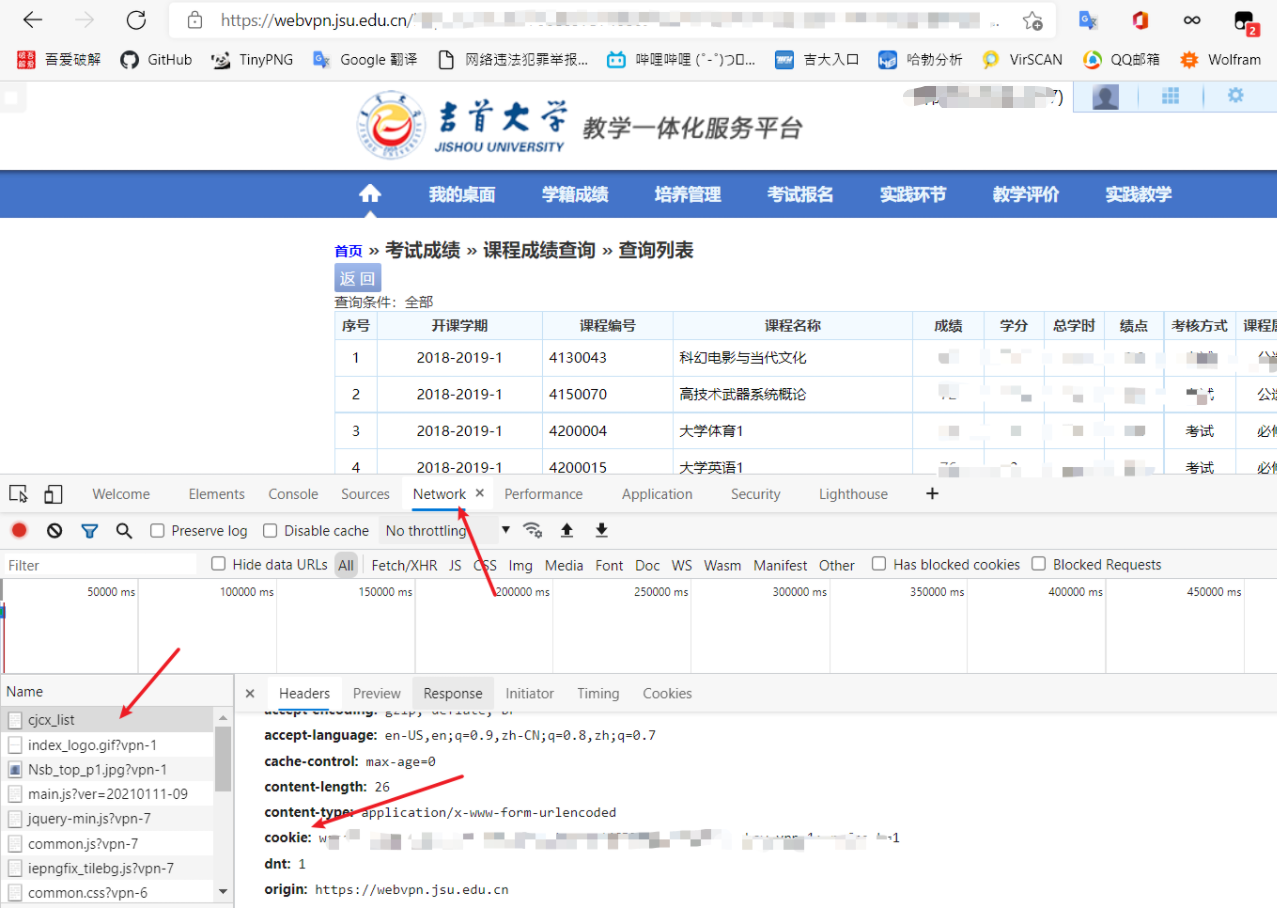

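Log in to the university's transcript query site, then update the cookie in the script.

Press F12, then press Ctrl+R to refresh the page. The figure below shows how to get the cookie: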

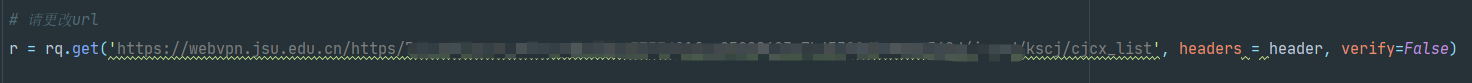
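A cookie string copied from the browser is easy to truncate or paste incompletely. The sketch below, using only the standard library, splits the copied string into name/value pairs so you can check that the expected entries are present before sending a request. The helper name `parse_cookie_header` is hypothetical, and the cookie names are simply those that appear in the script's `header`; whether the site needs all three is an assumption.

```python
def parse_cookie_header(raw):
    """Split a 'name=value; name2=value2' cookie string into a dict."""
    cookies = {}
    for part in raw.split(";"):
        part = part.strip()
        if not part or "=" not in part:
            continue  # ignore empty or malformed fragments
        name, value = part.split("=", 1)
        cookies[name.strip()] = value.strip()
    return cookies


# Placeholder value copied from the script; replace it with your own cookie.
raw = "wengine_vpn_ticketwebvpn_jsu_edu_cn=xxxxxxxxxx; show_vpn=1; refresh=1"
cookies = parse_cookie_header(raw)

# Names taken from the script's header (assumed to be what the site expects).
expected = ["wengine_vpn_ticketwebvpn_jsu_edu_cn", "show_vpn", "refresh"]
absent = [name for name in expected if name not in cookies]
if absent:
    print("Cookie string is missing:", ", ".join(absent))
```

If nothing is reported as missing, the same `raw` string can be used as the value of `'cookie'` in the script's `header`.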

Change the crawler's URL to the URL of your own transcript page.

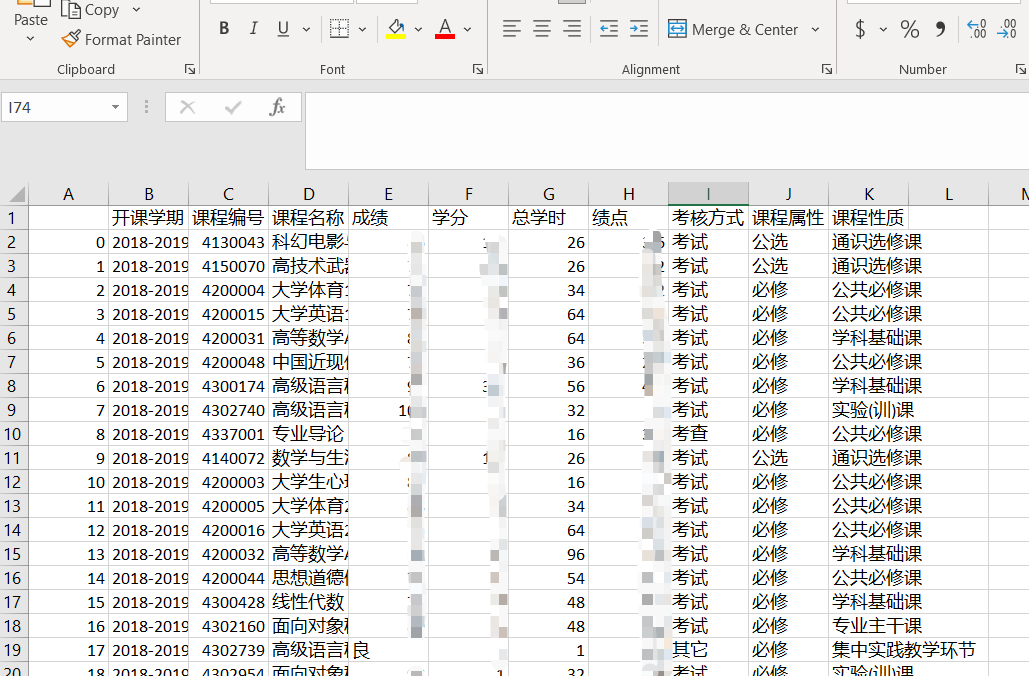

Run src/main.py to generate a CSV file under /result.
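The script writes to `../result/result.csv`, so the write will fail if the `result` directory does not exist or if the script is started from a different working directory. The sketch below shows one way to create the directory before writing. It uses the standard-library `csv` module rather than pandas to stay self-contained; the helper name `write_rows` and the sample row are made up for illustration.

```python
import csv
import tempfile
from pathlib import Path


def write_rows(path, header, rows):
    """Create the parent directory if needed, then write a CSV file."""
    path = Path(path)
    path.parent.mkdir(parents=True, exist_ok=True)
    # utf-8-sig matches the encoding used by the script, so that
    # spreadsheet programs detect the encoding of Chinese text correctly.
    with path.open("w", newline="", encoding="utf-8-sig") as f:
        writer = csv.writer(f)
        writer.writerow(header)
        writer.writerows(rows)
    return path


# Demonstration in a temporary directory with made-up sample data.
with tempfile.TemporaryDirectory() as tmp:
    out = write_rows(Path(tmp) / "result" / "result.csv",
                     ["course", "score"],
                     [["Example course", "90"]])
    text = out.read_text(encoding="utf-8-sig")
```

With pandas, the same `mkdir(parents=True, exist_ok=True)` call can be placed just before `data.to_csv(...)`.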

Results

Full code

# -*- coding: utf-8 -*-
# @Time : 5/29/2021 2:13 PM
# @Author : Chen0495
# @Email : 1346565673@qq.com|chenweiin612@gmail.com
# @File : main.py
# @Software: PyCharm
import requests as rq
from bs4 import BeautifulSoup as BS
import numpy as np
import pandas as pd

rq.adapters.DEFAULT_RETRIES = 5
s = rq.session()
s.keep_alive = False  # close surplus connections

header = {  # replace the cookie with your own
    'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/92.0.4501.0 Safari/537.36 Edg/92.0.891.1',
    'cookie': 'wengine_vpn_ticketwebvpn_jsu_edu_cn=xxxxxxxxxx; show_vpn=1; refresh=1'
}

# Replace the URL with your own transcript URL
r = s.get('https://webvpn.jsu.edu.cn/https/xxxxxxxxxxxxxxxxxxxxxxxxxxxxxx/jsxsd/kscj/cjcx_list', headers=header, verify=False)
soup = BS(r.text, 'html.parser')

# Collect the column names from the table header cells
head = []
for th in soup.find_all("th"):
    head.append(th.text)
while '' in head:
    head.remove('')
head.remove('序号')  # drop the serial-number ("序号") column

context = np.array(head)  # seed the array with the header row
x = []
flag = 0
for td in soup.find_all("td"):
    # Skip the very first cell and the first cell of each 11-cell row
    if flag != 0 and flag % 11 != 1:
        x.append(td.text)
    # Every 11th cell completes a row
    if flag % 11 == 0 and flag != 0:
        context = np.vstack((context, np.array(x)))
        x.clear()
    flag += 1

context = np.delete(context, 0, axis=0)  # remove the seed header row
data = pd.DataFrame(context, columns=head)
print(data)

# Write the CSV file; change the file name as needed
data.to_csv('../result/result.csv', encoding='utf-8-sig')
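The `flag` counter in the loop over `td` cells is compact but hard to read. The sketch below reproduces the same grouping on a plain list of strings, so the logic can be followed without fetching the page. It assumes, as the modulo arithmetic in the script implies, one leading cell before the data and 11 cells per row, of which the first is the serial number. The function name `group_cells` and the sample cells are hypothetical.

```python
def group_cells(cells, row_width=11):
    """Group a flat list of cell texts into rows, mirroring the flag counter.

    The first cell is ignored, the rest is cut into chunks of `row_width`,
    and the first cell of each chunk (the serial number) is dropped.
    A trailing incomplete chunk is discarded.
    """
    body = cells[1:]
    rows = []
    for start in range(0, len(body) - row_width + 1, row_width):
        chunk = body[start:start + row_width]
        rows.append(chunk[1:])
    return rows


# Made-up cells: one leading cell, two full rows, and one stray cell.
cells = (["lead"]
         + ["1"] + ["a%d" % i for i in range(10)]
         + ["2"] + ["b%d" % i for i in range(10)]
         + ["extra"])
rows = group_cells(cells)
print(len(rows), "rows of", len(rows[0]), "columns")
```

Each resulting row has 10 values, which matches the header list once '序号' has been removed from it.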
