My understanding of shuffle=True in the PyTorch DataLoader

Understanding shuffle=True:

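I used to be unsure what shuffle actually does. Suppose the data are a, b, c, d. With batch_size=2, I did not know which of the following happens:

1. Batches are taken in order and the shuffle happens inside each batch, i.e. a, b are taken first and then a, b are shuffled;

2. The whole dataset is shuffled first, and batches are taken afterwards.

It turns out to be the second.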
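The difference between the two candidates can be sketched in plain Python. This is only an illustration, not PyTorch code: the helper names `batch_then_shuffle` and `shuffle_then_batch` are hypothetical, and the standard `random` module stands in for the real sampler. Under the first behaviour a would always share a batch with b; under the second, any two items can end up together.

```python
import random


def batch_then_shuffle(items, batch_size, rng):
    """Candidate 1: cut batches in order, then shuffle inside each batch."""
    batches = [items[i:i + batch_size] for i in range(0, len(items), batch_size)]
    for batch in batches:
        rng.shuffle(batch)  # order changes, batch membership does not
    return batches


def shuffle_then_batch(items, batch_size, rng):
    """Candidate 2: shuffle the whole sequence, then cut batches."""
    shuffled = list(items)
    rng.shuffle(shuffled)
    return [shuffled[i:i + batch_size] for i in range(0, len(shuffled), batch_size)]


data = ["a", "b", "c", "d"]
rng = random.Random(0)  # fixed seed so the run is repeatable

# Collect every distinct pairing that shows up over many repetitions.
h1_pairs = {frozenset(b) for _ in range(200) for b in batch_then_shuffle(data, 2, rng)}
h2_pairs = {frozenset(b) for _ in range(200) for b in shuffle_then_batch(data, 2, rng)}

print(sorted(sorted(p) for p in h1_pairs))  # only the in-order pairings
print(sorted(sorted(p) for p in h2_pairs))  # pairings that mix items across positions
```

Seeing a batch such as a, c in a DataLoader run is therefore enough to rule out the first candidate.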

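The `DataLoader` docstring describes the parameter as:

    shuffle (bool, optional): set to ``True`` to have the data reshuffled
        at every epoch (default: ``False``).

and the `DataLoader` source handles it like this:

    if shuffle:
        sampler = RandomSampler(dataset)  # the sampler yields indices, not the samples themselves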
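To make the role of the indices concrete, here is a minimal plain-Python sketch of the pipeline, assuming a simplified model of it: a sampler produces a fresh permutation of indices each epoch, the indices are grouped into batches, and only then is the dataset indexed. The functions `random_sampler` and `batch_sampler` are hypothetical stand-ins written for this post, not PyTorch's own implementation.

```python
import random


def random_sampler(n, rng):
    """Yield a fresh random permutation of the indices 0..n-1."""
    indices = list(range(n))
    rng.shuffle(indices)
    yield from indices


def batch_sampler(index_iter, batch_size, drop_last=False):
    """Group a stream of indices into lists of length batch_size."""
    batch = []
    for idx in index_iter:
        batch.append(idx)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch and not drop_last:
        yield batch  # final batch, possibly smaller than batch_size


dataset = ["a", "b", "c", "d", "e"]
rng = random.Random(0)  # fixed seed so the run is repeatable

epochs = []
for epoch in range(2):
    # A new permutation is drawn at the start of every epoch.
    index_batches = batch_sampler(random_sampler(len(dataset), rng), batch_size=2)
    batches = [[dataset[i] for i in idx_batch] for idx_batch in index_batches]
    epochs.append(batches)
    print(epoch, batches)
```

With five samples and a batch size of 2, each epoch gives two full batches plus one leftover sample. Passing `drop_last=True` to the sketch discards that leftover, which mirrors the `drop_last` argument of `DataLoader`.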

Addendum: a quick test of how shuffle=True works in the PyTorch DataLoader

Here is the code:

    import numpy as np
    import torch
    from torch.utils.data import DataLoader, Dataset


    class DealDataset(Dataset):
        """Loads iris.csv: every column except the last is a feature, the last is the label."""

        def __init__(self):
            # Assumes ./iris.csv contains only numeric, comma-separated values (no header row).
            xy = np.loadtxt('./iris.csv', delimiter=',', dtype=np.float32)
            self.x_data = torch.from_numpy(xy[:, 0:-1])
            self.y_data = torch.from_numpy(xy[:, [-1]])
            self.len = xy.shape[0]

        def __getitem__(self, index):
            return self.x_data[index], self.y_data[index]

        def __len__(self):
            return self.len


    dealDataset = DealDataset()
    train_loader2 = DataLoader(dataset=dealDataset, batch_size=2, shuffle=True)

    # Print the inputs of each mini-batch to see the order in which samples are drawn.
    for i, data in enumerate(train_loader2):
        inputs, labels = data
        print(inputs)

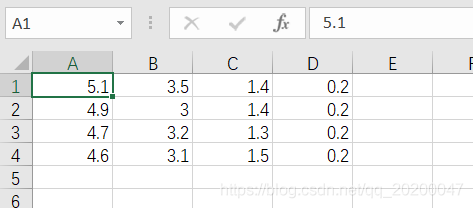

The simple dataset

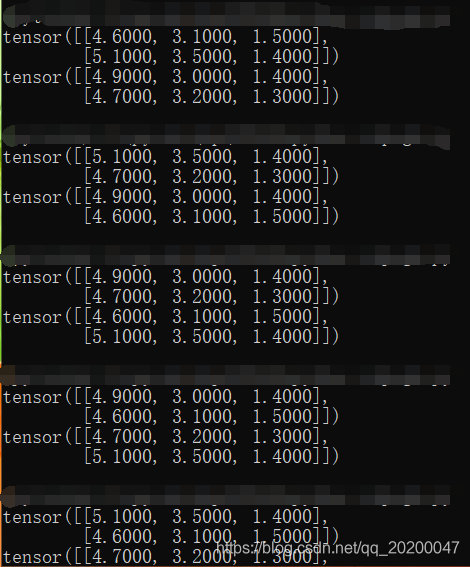

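The result after shuffling: on every pass the whole dataset is randomly reordered first and then split into mini-batches of size n (here n = batch_size = 2).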
